I Spent 6 Months Building a WarcraftLogs Competitor — Solo, on AWS Serverless

Reverse-engineering an undocumented data format. Cutting AI costs by over 95% through four rounds of optimization. Shipping features in 3 days because a tester asked. Here's what I learned.

Brian Morale

Founder & Chief Architect

I Spent 6 Months Building a WarcraftLogs Competitor — Solo, on AWS Serverless

TL;DR: 20+ years as an enterprise architect. Built a complete World of Warcraft combat log analysis platform with AI coaching — solo, on nights and weekends. AWS serverless (.NET on Lambda), 41 DynamoDB tables, AI costs cut by over 95% through four rounds of optimization. The platform is wowcoach.gg.

How This Started

I've played World of Warcraft for years. If you don't play — the short version is that 20 people get together, fight a boss for 6–10 minutes, and usually die. Then you do it again. Dozens of times. The game generates combat logs during these fights: massive text files containing every ability cast, every damage number, every heal, every death, with millisecond timestamps. After a night of wiping, you upload your log to an analysis tool and try to figure out what went wrong.

The problem is that the existing tools give you data. Charts, timelines, damage meters. What they don't give you is answers. Most players stare at a wall of numbers and still have no idea why they wiped. I wanted something different — a platform where you could ask, in plain English, "why did we wipe?" and get a real answer. Not "do better on mechanics." Something like: "Your tank died at 3:47 because they took 340k damage in 2.1 seconds from a debuff that wasn't dispelled. Your priest had Dispel Magic available but cast Prayer of Healing instead." (For the longer version of this argument, see the death of manual log review.)

I'm a software architect by day — 20+ years building enterprise systems, the last several focused on AWS serverless. How hard could it be to add some AI on top of combat logs?

Famous last words.

Getting there took six months, a custom combat log parser built from scratch, a 100x AI cost reduction, real-time collaborative features I never planned, a Texas LLC, a lost EIN, and a lawyer. Let me explain.

The Rabbit Hole: Building the Parser

The plan was simple: take combat log data, pipe it to an AI, get coaching. I figured I'd use the existing analysis platform's API for the data and focus on the AI layer.

Then I started looking at the API. Limitations on what data I could access. Rate limits. Terms of service restrictions. Dependency on a platform I didn't control. If they changed their API or decided they didn't like what I was building, I'd be dead in the water.

So I decided to build the parser myself.

This is the part where a sane person would have stopped. Blizzard's combat log format is effectively undocumented. Each line is a comma-separated event — timestamps, player IDs, ability names, damage values, coordinates — and the field layout changes between expansions, sometimes between patches. A single line looks like this:

8/18 21:47:12.841 SPELL_DAMAGE,Player-1168-0A234B,"Darkslash",0x514,

Creature-0-4232-2662-31585-214502,"Nalthor",0xa48,343293,"Sever",0x1,

4584072,5666400,0,0,3863,62613,62613,-1,1,0,0,nil,nil,nil

There are dozens of event types, each with different field layouts. Some fields are hexadecimal flags encoding multiple boolean states. Some values that look like damage numbers are actually resource states. The only way to figure it out is to generate logs, stare at them, cross-reference with in-game behavior, and iterate.

I spent weeks doing this. Weeks I could have spent building features.

Then came the accuracy chase. The incumbent tool has been parsing these logs for over a decade with millions of uploads. Players treat its numbers as gospel. If my numbers don't match, I'm the one who's wrong — even if I'm not. So I'd find a discrepancy, spend hours debugging, trace it to a difference in how we handle overkill damage or pet attribution or absorb shield accounting, and sometimes discover that the difference was on their side.

The breakthrough was accepting that the incumbent isn't trusted because it's perfect — it's trusted because it's the standard. I hit 98.99% parity and stopped chasing ghosts.

I also hit a performance milestone that made it all feel worth it: I optimized the parser from 123 seconds to parse a 4-hour raid file down to 25.9 seconds — 132,000 lines per second — while maintaining that accuracy.

How You Parse a 2GB File on Lambda

Here's the technical problem that made this interesting. A full raid night produces 800MB–2GB of raw text. Lambda tops out at 10GB of memory, and you need headroom for the .NET runtime and all the intermediate data structures. You can't just load the file and parse it. You also can't truncate — players expect analysis of their entire session.

The solution is a two-pass streaming architecture:

Pass 1: Boundary Detection. Stream the entire file line-by-line from S3 without storing content. Just detect boundaries — zone changes, dungeon starts, boss encounter starts and ends. Output: a list of byte offset ranges, one per encounter. Memory footprint: about 100KB regardless of file size.

Pass 2: Run-by-Run Processing. For each boundary, use S3 range requests to fetch only that encounter's bytes. Parse it fight-by-fight. After each fight is parsed, convert to Parquet, write to S3, release the memory. Move to the next fight.

This converts a 2GB memory problem into a series of 50–200MB problems, each processed and discarded before the next one starts. When metadata accumulates past 2.5GB of heap — which happens on 100+ fight raid nights — fight data spills to Lambda's 10GB ephemeral disk as compressed files, reloaded on demand.

The result: 2GB files parse reliably on a single Lambda invocation in under 30 seconds. No servers, no containers, no orchestration. One function.

The $0.20 Problem

With the parser working, I wired up the AI coaching layer. The first implementation was straightforward: stuff all the fight data into a prompt, add the user's question, send it to Claude Sonnet on AWS Bedrock, get a response.

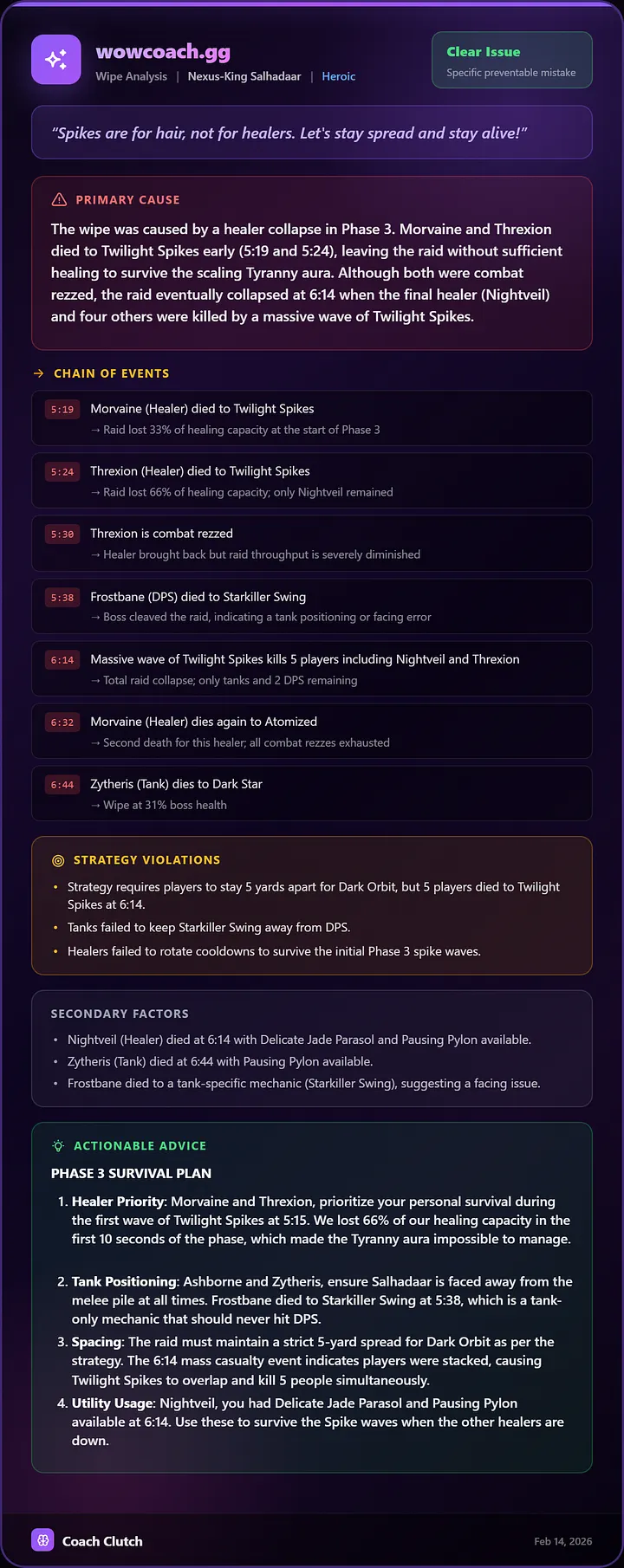

It worked. The coaching was genuinely good. Specific, timestamped, actionable. The AI would trace a chain of events — first death caused a healing gap, which caused a second death, which snowballed into a wipe — and explain it in a way that made sense.

Then I looked at the bill.

At $3.00/M input tokens and $15.00/M output tokens, each query was costing $0.10–0.20. If a user asks 10–50 questions during a session, that's $1–10 in AI costs per user per night. For a product I was planning to charge $5.99–12.99/month, the math was impossible.

I needed to get costs down by at least 10x. I ended up getting them down by over 95%. It took four separate rounds of optimization, and the first one was embarrassing.

The Logs I Should Have Checked

I'd enabled prompt caching, which should have dramatically reduced costs for repeated content. Weeks went by. Then I finally checked the token usage logs and saw: Input: 1727 (cache: 0).

Zero cache hits. For weeks.

The problem was prompt structure. I had dynamic content (the user's question, combat data) mixed in with static content (system prompt, tool definitions). Prompt caching requires the cacheable content to be a contiguous prefix — everything static at the top, everything dynamic at the bottom. I'd been re-processing the entire system prompt on every request.

The fix took 20 minutes. If I'd checked my logs on day one, I'd have saved weeks of wasted money.

The Provider Switch That Almost Didn't Happen

Next came the big lever. Gemini 2.5 Flash launched at $0.15/M input and $0.60/M output — roughly 20x cheaper than Claude Sonnet on Bedrock. For my use case — reading structured data and producing coaching responses — the quality was comparable.

Because I'd built a provider-agnostic interface from the start (shared abstraction for chat completion with tool use), switching the primary provider was an environment variable change. Not a rewrite. I keep Claude on Bedrock as a fallback.

But pricing wasn't even the main reason I switched. Rate limits were.

AWS Bedrock started me at 2 requests per minute. Two. For a product where a single user session might generate 10–20 AI queries. I submitted a quota increase request and waited. And waited. It took a month to get approved for 200 requests per minute — better, but still not enough for production with concurrent users.

Anthropic's direct API capped me at 50 requests per minute with no clear path to increase it.

Gemini? 1,000 requests per minute out of the box. No phone calls, no quota requests, no waiting. When you're a solo developer with no enterprise sales relationship, default rate limits determine which providers you can realistically build on. This doesn't show up in any pricing calculator, but it nearly killed the project before costs did.

The Architecture That Actually Solved It

The real breakthrough was moving from monolithic prompts to a tool-based architecture.

The original approach — dump all fight data into context and let the AI sort it out — was wasteful. A monolithic prompt for a complex fight could be 40–100k tokens. Most of those tokens were irrelevant to whatever the user actually asked.

The tool-based architecture flipped this. Instead of front-loading data, the AI starts with minimal context and decides what it needs. It sees 25+ available tools — get_death_recap, get_ability_usage, get_mechanic_failures, get_player_performance — and selects 2–4 relevant ones per question.

"Why did I die?" fetches the death recap. "Why did we wipe?" starts with get_death_recap, discovers the first death was the tank, then calls get_mechanic_failures to check if a swap was missed. The AI reasons through it step by step, pulling data as needed.

Token usage dropped 70–85%. And because the AI only sees relevant data, accuracy actually improved. Less noise means better answers.

A typical coaching conversation now costs a fraction of what it started at — over 95% cheaper than the first implementation. The fourth round of optimization targeted AI thinking tokens, tuning reasoning depth per feature so the model doesn't overthink structured tasks. That's the difference between a product that bleeds money and one that works.

The Feature I Didn't Plan to Build

The original vision was simple: upload a log, get AI coaching. Then actual users showed up.

One of my early alpha testers — a well-known figure in the WoW tools community — spent three hours exploring the platform on his first visit. He gave me specific feedback, pointed out edge cases, suggested improvements. And then he asked for something I hadn't considered: an instant fight replay.

The idea: after a wipe, the raid leader could replay exactly what happened — every player's position, every mob, animated on the actual game map — and draw on it in real time. Annotations, arrows, circles. Like Miro for raid strategy, but on top of real fight data. Shareable via link. Anyone who connects sees the replay and the drawings update live.

It was not a small ask.

But it was the right ask. This is how raid leaders actually work during progression. They wipe, they need to figure out what went wrong, they need to communicate positioning changes to 20 people over Discord. They're doing this with verbal descriptions and crude drawings. The existing tools don't help here.

So I built it. The fight replay pulls position data from the parsed combat log, transforms coordinates to map space, and animates them on a canvas. The collaborative drawing layer uses WebSockets — every connected viewer sees the same playback state and each other's annotations in real time. A raid leader can pause the replay at the moment of a wipe, draw "don't stand here" arrows, and 20 people see it instantly.

It shipped in three days.

That feature — along with automatic wipe analysis that runs between pulls and pushes results to Discord — changed what WowCoach is. It stopped being "a tool you check after raid night" and became "a tool you use during raid night." The desktop companion app detects when a fight ends, uploads the log, triggers the parse, runs AI analysis, and pushes the wipe summary to the raid's Discord channel. All automatic. The raid leader sees what went wrong before the next pull timer expires. (Curious what the AI side actually says? Meet Coach Clutch.)

I didn't plan any of this. I built what users told me they needed for Mythic progression. And it turns out that building fast — being able to ship a complex real-time feature in days instead of months — is the only competitive advantage a solo developer has.

Building with Claude Code

That velocity comes from somewhere. This project wouldn't exist at its current scope without AI-assisted development — specifically, Claude Code, Anthropic's terminal-based coding agent.

Claude Code isn't autocomplete. It reads your codebase, understands your architecture, and makes changes across multiple files. I describe a feature — "add a WebSocket endpoint that broadcasts fight replay state to connected viewers, with collaborative drawing events" — and it plans the implementation across CloudFormation templates, .NET backend services, and the Next.js frontend.

The compounding effect is real. By month three, it understood my naming conventions, my CloudFormation patterns, my repository structure. It stopped writing generic code and started writing my code.

Where it struggled: it hallucinated combat log field layouts (undocumented formats aren't in anyone's training data), proposed architectures that wouldn't survive Lambda's constraints, and sometimes "improved" working code with unnecessary abstractions. The fix was always the same: be specific about constraints. "This runs on Lambda with a 15-minute timeout and 10GB memory. The file streams from S3 using range requests. Don't load it into memory." Constraints produce better AI output than open-ended asks.

Six months of nights and weekends produced 8 Lambda functions, 41 DynamoDB tables, a Next.js frontend, a Tauri desktop app, real-time collaborative features, and a CI/CD pipeline. It also produced a Texas LLC, an EIN I had to chase down from the IRS during a partial government shutdown, and a payment processor that needed a hand-signed ownership structure document for a single-member LLC. And a code signing certificate that nearly broke me.

That last one deserves its own paragraph. To distribute a desktop app on Windows without terrifying "Unknown Publisher" warnings, you need a code signing certificate. To get one under your business name, the certificate authority needs to verify your LLC exists. My LLC was two months old. Azure's identity verification rejected my Texas driver's license entirely — hard blocked, no workaround. I moved to a third-party CA, but they needed my phone number listed in an approved business directory. D&B gave me system errors. ZoomInfo turned out to be enterprise sales software. My LLC was too new to appear in any directory. I ended up hiring a lawyer to write a legal opinion letter confirming that yes, WowCoach LLC is a real company and I'm the real owner. A lawyer. To sign a desktop app.

That's the part nobody warns you about. The code is the fun part. The business infrastructure is the grind.

What I Learned

Check your AI logs on day one. I ran for weeks with zero prompt cache hits because of a structural mistake. That's money on fire.

Stop chasing the last 1%. I spent weeks matching the incumbent's numbers exactly, debugging edge cases that turned out to be their inconsistencies. Ship at 99% and move on.

Let the AI fetch, don't force-feed it. Tool-based architectures beat monolithic prompts on cost, accuracy, and extensibility. If you're stuffing everything into context, stop.

Build the provider abstraction early. When Gemini Flash dropped and cut my costs 20x, I migrated in a day. If I'd been locked to one provider's SDK, that would've been a month.

Ship fast and listen. The features that matter most — real-time wipe analysis, collaborative replay, Discord integration — weren't in my original plan. They came from watching how people actually use the tool. Build what they need, not what you imagined.

The Question I Still Can't Answer

I can tell you the parser is accurate. I can tell you the AI costs are sustainable. I can tell you the architecture scales. What I can't tell you is whether people will pay for it.

The incumbent tool is free for most players. It has a decade of trust, millions of users, and deep integration into the community. I'm charging a monthly subscription for something that overlaps with what they already have — and betting that AI coaching, real-time wipe analysis, and collaborative strategy tools are enough differentiation to justify it. (For the honest side-by-side, see WarcraftLogs vs WoWAnalyzer vs WowCoach.)

Some nights I'm convinced it is. The AI gives answers that take experienced players 20 minutes of manual log reading to find. The real-time features solve problems no other tool even attempts. Other nights I stare at the pricing page and wonder if I'm delusional — if players will try it once, think "cool," and go back to what they know.

I don't have an answer yet. But the AI doesn't have to be expensive. The hardest part is still the same thing it's always been: building something people actually want to use.

Brian Morale is Founder & Chief Architect of WowCoach.gg. With 20+ years in enterprise software architecture, he builds systems that scale — whether it's financial platforms by day or AI coaching engines by night.

Related topics